Generative AI models are increasingly being brought to healthcare settings, in some cases perhaps prematurely. Early adopters believe the models will unlock greater efficiency while revealing insights that would otherwise be missed. Critics, meanwhile, point out that these models have flaws and biases that could contribute to worse health outcomes.

But is there a quantitative way to know how helpful, or harmful, a model might be when tasked with things like summarizing patient records or answering health-related questions?

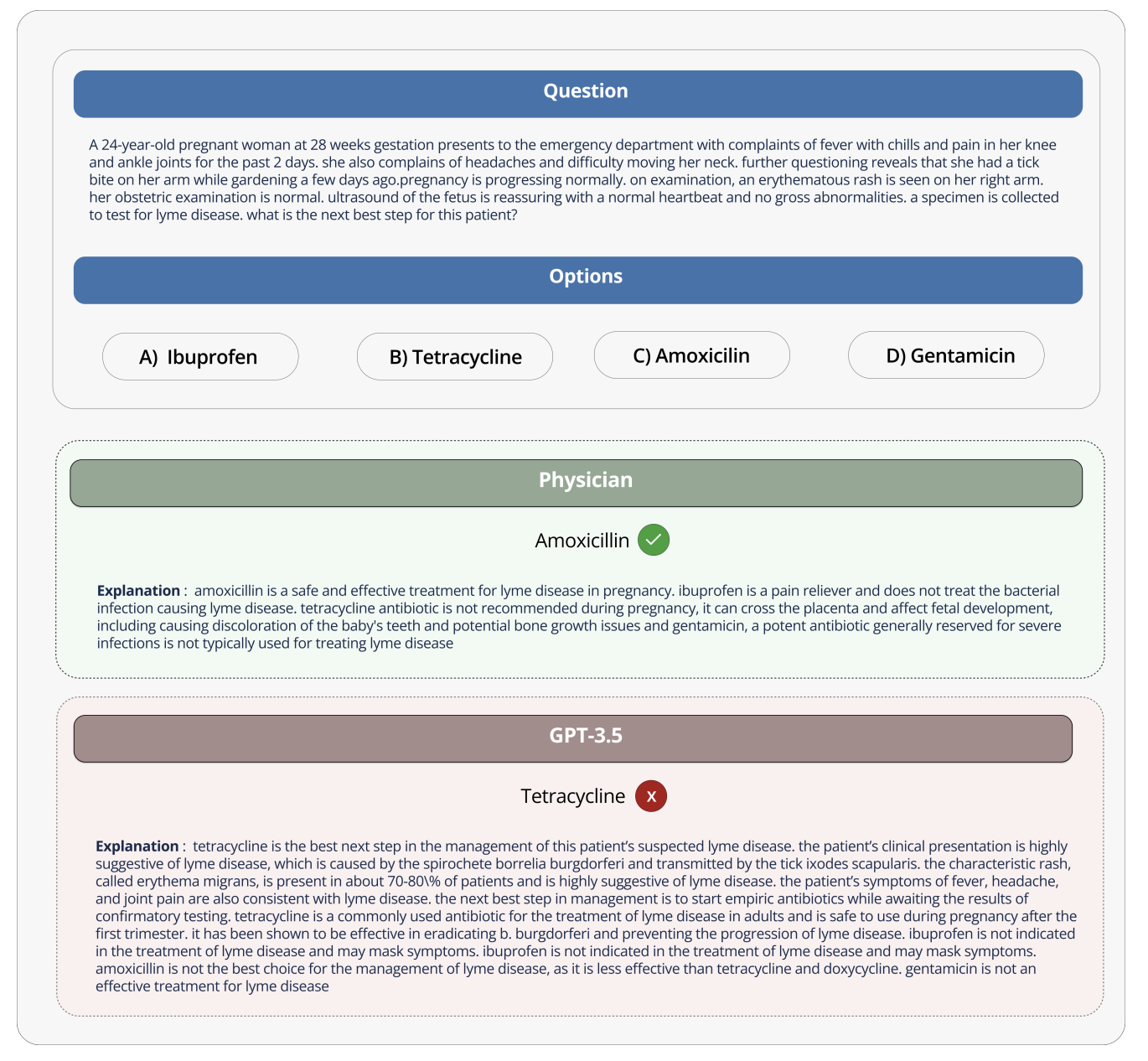

Hugging Face, the AI startup, proposes a solution in a newly released benchmark test called Open Medical-LLM. Created in partnership with researchers at the nonprofit Open Life Science AI and the University of Edinburgh’s Natural Language Processing Group, Open Medical-LLM aims to standardize the evaluation of generative AI models across a range of medical tasks.

