In January, Elon Musk told investors Tesla faces a choice: “hit the AI chip wall or build a fab.” Weeks later, Tim Cook warned that surging memory costs would bite into Apple’s margins for the foreseeable future. A 12GB memory module that cost Apple roughly $30 at the start of 2025 had climbed to nearly $70 by December. When the CEO of the world’s most valuable company and the builder of the world’s largest AI training site are both sounding the alarm on the same problem, it is worth paying attention.

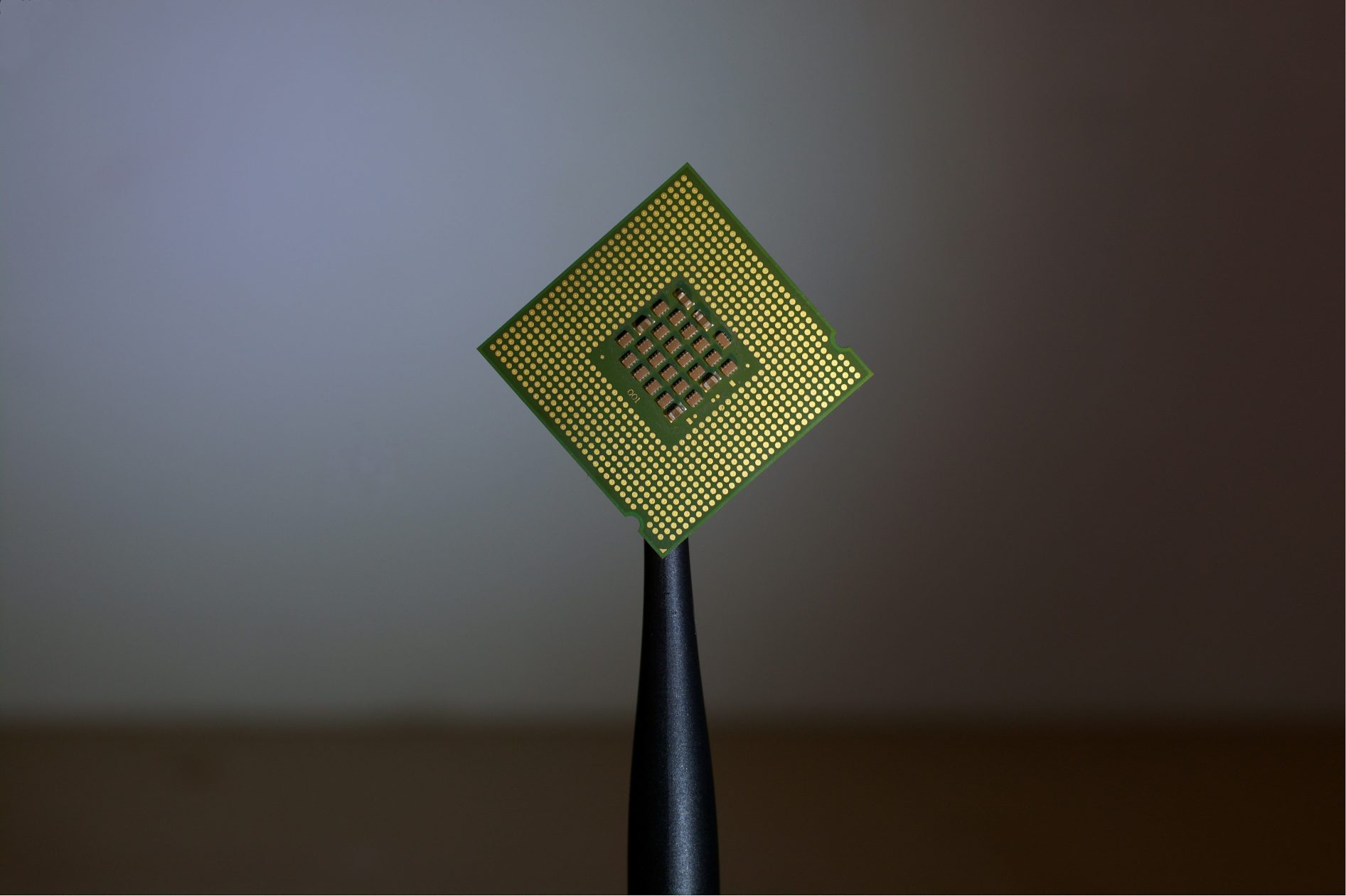

The problem is structural. Every major AI lab, hyperscaler and sovereign AI programme is racing to secure GPU capacity. Microsoft, Google, Meta and Amazon are collectively spending north of $600 billion a year on data-center infrastructure, with capital expenditure projected to push toward $700 billion by the end of 2026. That money flows overwhelmingly to the same three memory manufacturers and the same handful of advanced packaging facilities. SK Hynix, Samsung and Micron are running at full tilt, yet Micron’s CEO has admitted the company can meet only 50 to 66 percent of demand from several key customers. TSMC’s advanced packaging lines are booked solid through 2026. The bottleneck is tightening.

The knock-on effects are now reaching consumers. DRAM prices surged 75 percent between December 2025 and January 2026 in what the industry has started calling “RAMmageddon.” Smartphone bill-of-materials costs are up as much as 25 percent at the low end. PC vendors including Dell, HP and Lenovo are warning of 15 to 20 percent price hikes. IDC has revised 2026 global smartphone shipment forecasts down by 2.1 percent. The chip shortage is no longer an enterprise procurement headache. It is a consumer price crisis in the making.

The reflex response has been to build more fabrication plants. Intel is pouring $32 billion into two new Arizona fabs. Samsung has a $17 billion facility under construction in Texas. TSMC’s $40 billion Phoenix complex is only just beginning to ramp up. Eighteen new fab projects broke ground in 2025 alone. But here is the uncomfortable truth: even the most aggressive construction schedule takes three to four years from groundbreaking to volume production. Fabs coming online in 2028 will not ease the pressure that is pushing up the price of your next phone.

There is also a deeper irony at work. While the world scrambles to manufacture more chips, a staggering amount of existing compute sits idle. Most colocation facilities operate at between 30 and 50 percent GPU utilisation. Best-in-class hyperscalers struggle to sustain rates above 60 to 70 percent. Enterprises routinely overprovision by two or three times their actual requirements. One Fortune 500 financial institution left $120 million in GPU infrastructure unused for two years. The AI compute crunch is just as much a distribution problem as a production problem.
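The arithmetic behind that claim is simple enough to sketch. The snippet below is a back-of-envelope illustration, not a model of any real fleet: the function name and the 65 percent target are assumptions chosen for the example, while the 30 to 50 percent starting points are the colocation figures quoted above.

```python
def effective_capacity_gain(current_utilisation: float, target_utilisation: float) -> float:
    """Fractional increase in useful GPU-hours from raising utilisation alone.

    Assumes the hardware count stays fixed, so useful compute scales
    linearly with utilisation. Both arguments are fractions in (0, 1].
    """
    for value in (current_utilisation, target_utilisation):
        if not 0 < value <= 1:
            raise ValueError("utilisation must be a fraction in (0, 1]")
    return target_utilisation / current_utilisation - 1


# Hypothetical target: 65 percent, within the 60 to 70 percent range
# attributed to best-in-class hyperscalers.
for current in (0.30, 0.40, 0.50):
    gain = effective_capacity_gain(current, target_utilisation=0.65)
    print(f"{current:.0%} -> 65%: {gain:+.0%} useful compute, no new chips")
```

Under these assumptions, a facility running at half capacity would gain roughly 30 percent more useful compute from the hardware it already owns, and one running at 30 percent would more than double it.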

This is where decentralised compute enters the picture. The concept is straightforward: rather than concentrating all processing power in a few massive facilities owned by a few massive companies, aggregate the GPUs that already exist across data centers, universities, research institutions and enterprises worldwide. Let idle capacity work. The technology to do this at scale has matured rapidly. Decentralised compute platforms now offer cost savings of 70 to 90 percent compared to hyperscaler pricing for batch workloads, inference tasks and short-duration training runs.

Over 1,170 active decentralised infrastructure projects were operating globally as of early 2025, up from 650 two years prior. The sector’s revenue is projected to surpass $150 million in 2026. More importantly, the model addresses the problem by raising utilisation of existing hardware rather than by manufacturing more of it. It does not require breaking ground on a single new facility. It does not compete for the same scarce memory chips and packaging capacity that are already stretched to breaking point. It puts hardware that has already been manufactured, shipped and installed to productive use.

None of this means new fabs are unnecessary. Long-term capacity expansion is essential. But treating fabrication as the only answer ignores the millions of high-performance GPUs collecting dust in racks around the world. The shortage is acute today, and construction is a multi-year proposition. Before we pour another hundred billion into concrete and clean rooms, it is worth asking a simpler question: what if we used what we already have?

By David Sherman, Head of Ecosystem at io.net
