Firefly, Adobe’s family of generative AI models, doesn’t have the best reputation among creatives.

The Firefly image generation model in particular has been derided as underwhelming and flawed compared to Midjourney, OpenAI’s DALL-E 3, and other rivals, with a tendency to distort limbs and landscapes and miss the nuances in prompts. But Adobe is trying to right the ship with its third-generation model, Firefly Image 3, releasing this week during the company’s Max London conference.

The model, now available in Photoshop (beta) and Adobe’s Firefly web app, produces more “realistic” imagery than its predecessor (Image 2) and its predecessor’s predecessor (Image 1) thanks to an ability to understand longer, more complex prompts and scenes as well as improved lighting and text generation capabilities. It should more accurately render things like typography, iconography, raster images and line art, says Adobe, and is “significantly” more adept at depicting dense crowds and people with “detailed features” and “a variety of moods and expressions.”

For what it’s worth, in my brief, unscientific comparison, Image 3 does appear to be a step up from Image 2.

I wasn’t able to try Image 3 myself. But Adobe PR sent a few outputs and prompts from the model, and I managed to run those same prompts through Image 2 on the web to get samples to compare the Image 3 outputs with. (Keep in mind that the Image 3 outputs could’ve been cherry-picked.)

Notice the lighting in this headshot from Image 3 compared to the one below it, from Image 2:

From Image 3. Prompt: “Studio portrait of young woman.”

Same prompt as above, from Image 2.

The Image 3 output looks more detailed and lifelike to my eyes, with shadowing and contrast that’s largely absent from the Image 2 sample.

Here’s a set of images showing Image 3’s scene understanding at play:

From Image 3. Prompt: “An artist in her studio sitting at desk looking pensive with tons of paintings and ethereal.”

Same prompt as above. From Image 2.

Note that the Image 2 sample is fairly basic compared to the output from Image 3 in terms of level of detail and overall expressiveness. There’s some wonkiness in the shirt of the Image 3 subject (around the waist area), but her pose is more complex than that of the Image 2 subject. (And the Image 2 subject’s clothes are also a bit off.)

Some of Image 3’s improvements can no doubt be traced to a larger and more diverse training data set.

Like Image 2 and Image 1, Image 3 is trained on uploads to Adobe Stock, Adobe’s royalty-free media library, along with licensed and public domain content for which the copyright has expired. Adobe Stock grows all the time, and consequently so too does the available training data set.

In an effort to ward off lawsuits and position itself as a more “ethical” alternative to generative AI vendors who train on images indiscriminately (e.g. OpenAI, Midjourney), Adobe has a program to pay Adobe Stock contributors to the training data set. (We’ll note that the terms of the program are rather opaque, though.) Controversially, Adobe also trains Firefly models on AI-generated images, which some consider a form of data laundering.

Recent Bloomberg reporting revealed AI-generated images in Adobe Stock aren’t excluded from Firefly image-generating models’ training data, a troubling prospect considering those images might contain regurgitated copyrighted material. Adobe has defended the practice, claiming that AI-generated images make up only a small portion of its training data and go through a moderation process to ensure they don’t depict trademarks or recognizable characters or reference artists’ names.

Of course, neither diverse, more “ethically” sourced training data nor content filters and other safeguards guarantee a perfectly flaw-free experience — see users generating people flipping the bird with Image 2. The real test of Image 3 will come once the community gets its hands on it.

New AI-powered features

Image 3 powers several new features in Photoshop beyond enhanced text-to-image.

A new “style engine” in Image 3, along with a new auto-stylization toggle, allows the model to generate a wider array of colors, backgrounds and subject poses. They feed into Reference Image, an option that lets users condition the model on an image whose colors or tone they want their future generated content to align with.

Three new generative tools — Generate Background, Generate Similar and Enhance Detail — leverage Image 3 to perform precision edits on images. The (self-descriptive) Generate Background replaces a background with a generated one that blends into the existing image, while Generate Similar offers variations on a selected portion of a photo (a person or an object, for example). As for Enhance Detail, it “fine-tunes” images to improve sharpness and clarity.

If these features sound familiar, that’s because they’ve been in beta in the Firefly web app for at least a month (and available in Midjourney for much longer than that). This marks their debut in Photoshop, albeit in beta.

Speaking of the web app, Adobe isn’t neglecting this alternate route to its AI tools.

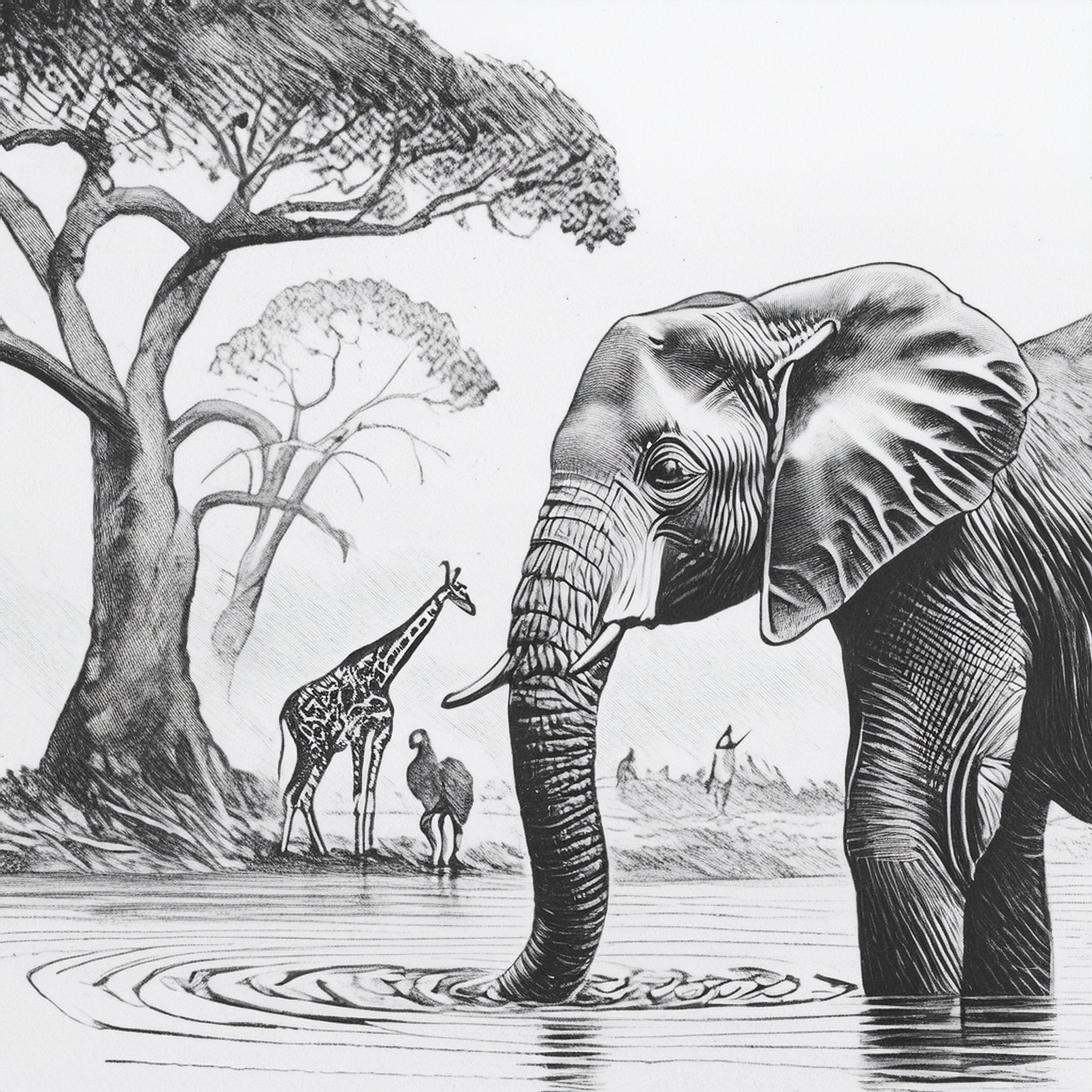

To coincide with the release of Image 3, the Firefly web app is getting Structure Reference and Style Reference, which Adobe’s pitching as new ways to “advance creative control.” (Both were announced in March, but they’re now becoming widely available.) With Structure Reference, users can generate new images that match the “structure” of a reference image, such as a head-on view of a race car. Style Reference is essentially style transfer by another name, preserving the content of an image (e.g. elephants on an African safari) while mimicking the style (e.g. pencil sketch) of a target image.

Here’s Structure Reference in action:

Original image.

Transformed with Structure Reference.

And Style Reference:

Original image.

Transformed with Style Reference.

I asked Adobe if, with all the upgrades, Firefly image generation pricing would change. Currently, the cheapest Firefly premium plan is $4.99 per month — undercutting competition like Midjourney ($10 per month) and OpenAI (which gates DALL-E 3 behind a $20-per-month ChatGPT Plus subscription).

Adobe said that its current tiers will remain in place for now, along with its generative credit system. It also said that its indemnity policy, which states Adobe will pay copyright claims related to works generated in Firefly, won’t be changing either, nor will its approach to watermarking AI-generated content. Content Credentials — metadata to identify AI-generated media — will continue to be automatically attached to all Firefly image generations on the web and in Photoshop, whether generated from scratch or partially edited using generative features.
