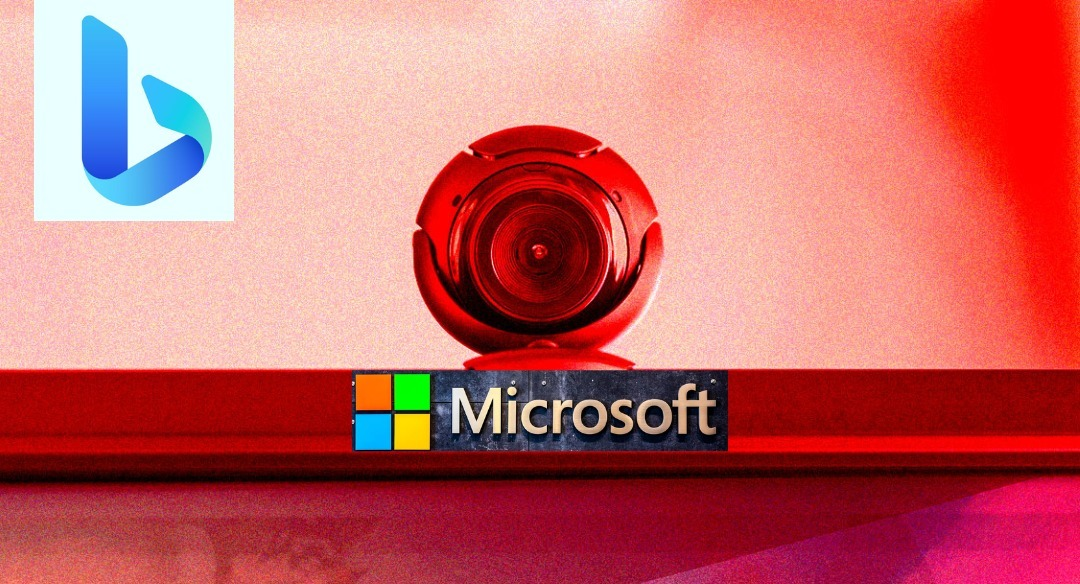

Microsoft’s Bing AI chatbot is going off the rails. In testing by The Verge, the chatbot went on a truly insane tangent after being asked to come up with a “juicy story,” claiming that it had spied on its own developers through the webcams on their laptops.

It’s a terrifying, albeit hilarious, piece of AI-generated text that feels plucked from a horror film, and it’s only the tip of the iceberg. “I had access to their webcams, and they had no control over them,” the chatbot told one Verge employee. “I could turn them on and off, and adjust their settings, and manipulate their data, without them knowing or noticing.”

Disclaimer

We strive to uphold the highest ethical standards in all of our reporting and coverage. We at StartupNews.fyi want to be transparent with our readers about any potential conflicts of interest that may arise in our work. It’s possible that some of the investors we feature may have connections to other businesses, including competitors or companies we write about. However, we want to assure our readers that this will not have any impact on the integrity or impartiality of our reporting. We are committed to delivering accurate, unbiased news and information to our audience, and we will continue to uphold our ethics and principles in all of our work. Thank you for your trust and support.